Eli Bendersky's website » Life of an instruction in LLVM

Life of an instruction in LLVM

November 24th, 2012 at 3:37 pm

LLVM is a complex piece of software. There are several paths one may take on the quest of

understanding how it works, none of which is simple. I recently had to dig into some areas of LLVM

I was not previously familiar with, and this article is one of the outcomes of this quest.

What I aim to do here is follow the various incarnations an "instruction"

takes when it goes through LLVM's multiple compilation stages, starting from a

syntactic construct in the source language and ending with its encoding as binary machine

code in an output object file.

This article in itself will not teach one how LLVM works. It assumes some existing

familiarity with LLVM's design and code base, and leaves a lot of "obvious"

details out. Note that unless otherwise stated, the information here is relevant to

LLVM 3.2. LLVM and Clang are fast-moving projects, and future changes may render parts

of this article incorrect. If you notice any discrepancies, please let me know and I'll

do my best to fix them.

Input code

I want to start this exploration process at the beginning – C source. Here's the

simple function we're going to work with:

    int foo(int aa, int bb, int cc) {
      int sum = aa + bb;
      return sum / cc;
    }

The focus of this article is going to be on the division operation.

Clang

Clang serves as the front-end for LLVM, responsible for converting C, C++ and ObjC source into

LLVM IR. Clang's main complexity comes from the ability to correctly parse and semantically

analyze C++; the flow for a simple C-level operation is actually quite straightforward.

Clang's parser builds an Abstract Syntax Tree (AST) out of the input. The AST is the main

"currency" in which various parts of Clang deal. For our division operation, a

BinaryOperator node is created in the AST, carrying the BO_Div "operator kind" [1].

Clang's code generator then goes on to emit an sdiv LLVM IR instruction from the node, since this

is a division of signed integral types.
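
To make the signed-versus-unsigned decision concrete, here is a minimal sketch of how a code generator might pick the IR mnemonic for a division node. The `ToyBinOpKind`, `ToyType`, `ToyBinaryOperator` and `divMnemonicFor` names are hypothetical stand-ins I made up for illustration; they are not Clang's actual classes or functions.

```cpp
#include <cassert>
#include <string>

// Hypothetical stand-ins for Clang's AST types; only the shape matters here.
enum class ToyBinOpKind { Add, Div };
enum class ToyType { SignedInt, UnsignedInt, Float };

struct ToyBinaryOperator {
  ToyBinOpKind kind;   // plays the role of the "operator kind" (BO_Div etc.)
  ToyType operandType; // operand type as determined by semantic analysis
};

// Pick the LLVM IR mnemonic for a division node based on its operand type.
std::string divMnemonicFor(const ToyBinaryOperator &node) {
  assert(node.kind == ToyBinOpKind::Div && "this sketch only covers division");
  switch (node.operandType) {
  case ToyType::SignedInt:
    return "sdiv";
  case ToyType::UnsignedInt:
    return "udiv";
  case ToyType::Float:
    return "fdiv";
  }
  return ""; // unreachable for valid enum values
}
```

For the `sum / cc` expression in our function both operands are `int`, so a node of this shape would take the `SignedInt` branch and yield `sdiv`.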

LLVM IR

Here is the LLVM IR created for the function [2]:

    define i32 @foo(i32 %aa, i32 %bb, i32 %cc) nounwind {
    entry:
      %add = add nsw i32 %aa, %bb
      %div = sdiv i32 %add, %cc
      ret i32 %div
    }

In LLVM IR, sdiv is a BinaryOperator, which is a subclass of Instruction with the opcode SDiv [3].

Like any other instruction, it can be processed by the LLVM analysis and transformation passes.

For a specific example targeted at SDiv, take a look at SimplifySDivInst. Since all through the

LLVM "middle-end" layer the instruction remains in its IR form, I won't spend much time

talking about it. To witness its next incarnation, we'll have to look at the LLVM code generator.
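
The preprocessor trick mentioned in [3] is commonly known as an "X-macro": one list of names is expanded several times with different macro definitions. The following is only a sketch of the general technique with a made-up opcode list (`TOY_BINARY_OPS`, `ToyOpcode` and `toyOpcodeName` are my own hypothetical names); LLVM keeps its real list in a separate .def file and the details differ.

```cpp
#include <cassert>
#include <string>

// Hypothetical opcode list. In this sketch the list lives in a macro rather
// than in a separate .def file that gets #included repeatedly.
#define TOY_BINARY_OPS(HANDLE) \
  HANDLE(Add)                  \
  HANDLE(Sub)                  \
  HANDLE(SDiv)                 \
  HANDLE(UDiv)

// First expansion: one enumerator per opcode.
enum ToyOpcode {
#define AS_ENUM(name) name,
  TOY_BINARY_OPS(AS_ENUM)
#undef AS_ENUM
  NumToyOpcodes
};

// Second expansion: one printable name per opcode, in the same order.
inline std::string toyOpcodeName(ToyOpcode op) {
  static const char *const names[] = {
#define AS_STRING(name) #name,
      TOY_BINARY_OPS(AS_STRING)
#undef AS_STRING
  };
  assert(op < NumToyOpcodes && "not a real opcode");
  return names[op];
}
```

Because the identifier `SDiv` only appears once, inside the list, searching the sources for the places where it is "defined" turns up very little, which is why these things are hard to grep for.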

The code generator is one of the most complex parts of LLVM. Its task is to "lower" the

relatively high-level, target-independent LLVM IR into low-level, target-dependent "machine

instructions" (MachineInstr). On its way to a MachineInstr, an LLVM IR instruction passes through

a "selection DAG node" incarnation, which is what I'm going to discuss next.

Selection DAG node

Selection DAG [4] nodes are created by the SelectionDAGBuilder class acting "at the service of"

SelectionDAGISel, which is the main base class for instruction selection. SelectionDAGISel goes

over all the IR instructions and calls the SelectionDAGBuilder::visit dispatcher on them. The

method handling an SDiv instruction is SelectionDAGBuilder::visitSDiv. It requests a new SDNode

from the DAG with the opcode ISD::SDIV, which becomes a node in the DAG.

The initial DAG constructed this way is still only partially target dependent. In LLVM

nomenclature it's called "illegal" – the types it contains may not be directly

supported by the target; the same is true for the operations it contains.

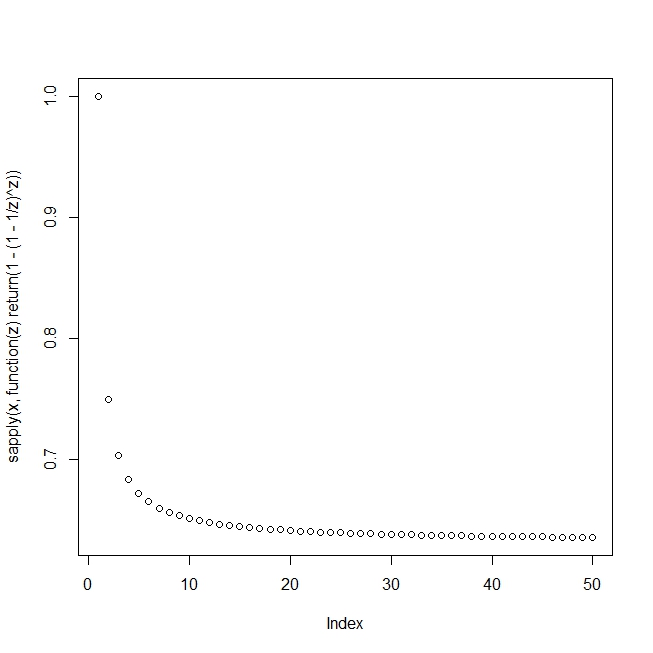

There are a couple of ways to visualize the DAG. One is to pass the -debug flag to llc,

which will cause it to create a textual dump of the DAG during all the selection

phases. Another is to pass one of the -view options which causes it to dump and

display an actual image of the graph (more details in the code generator docs). Here's

the relevant portion of the DAG showing our SDiv node, right after DAG creation (the

sdiv node is at the bottom):

![Initial selection DAG for foo, with the sdiv node at the bottom](http://eli.thegreenplace.net/wp-content/uploads/2012/11/sdiv_initial_dag.png)

Before the SelectionDAG machinery actually emits machine instructions from DAG nodes,

these undergo a few other transformations. The most important are the type and

operation legalization steps, which use target-specific hooks to convert all operations and

types into ones that the target actually supports.

"Legalizing" sdiv into sdivrem on x86

The division instruction (idiv for signed operands) of x86 computes both the quotient and the

remainder of the operation, and stores them in two separate registers. Since LLVM's instruction

selection distinguishes between such operations (called ISD::SDIVREM) and division that only

computes the quotient (ISD::SDIV), our DAG node will be "legalized" during the DAG

legalization phase when the target is x86. Here's how it happens.

An important interface used by the code generator to convey target-specific information to the

generally target-independent algorithms is TargetLowering. Targets implement this interface to

describe how LLVM IR instructions should be lowered to legal SelectionDAG operations. The x86

implementation of this interface is X86TargetLowering [5]. In its constructor it marks which

operations need to be "expanded" by operation legalization, and ISD::SDIV is one of them.

Here's an interesting comment from the code:

    // Scalar integer divide and remainder are lowered to use operations that
    // produce two results, to match the available instructions. This exposes
    // the two-result form to trivial CSE, which is able to combine x/y and x%y
    // into a single instruction.

When SelectionDAGLegalize::LegalizeOp sees the Expand flag on an SDIV node [6], it

replaces it with ISD::SDIVREM. This is an interesting example to demonstrate the

transformation an operation can undergo while in the selection DAG form.
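
The interplay between a target marking an operation as "expand" and the generic legalizer acting on that mark can be sketched in a few lines. This is a toy model under my own assumptions: `ToyISD`, `ToyAction`, `ToyTargetLowering`, `ToyNode`, `makeX86LikeLowering` and `legalizeOp` are hypothetical names, and the real TargetLowering interface also takes value types and supports more actions than the two shown here.

```cpp
#include <cassert>
#include <map>

// Hypothetical miniature of the idea; the real opcodes and API are richer.
enum class ToyISD { ADD, SDIV, SREM, SDIVREM };
enum class ToyAction { Legal, Expand };

// The target records, per operation, what the legalizer should do with it.
struct ToyTargetLowering {
  std::map<ToyISD, ToyAction> actions;

  void setOperationAction(ToyISD op, ToyAction action) { actions[op] = action; }

  ToyAction getOperationAction(ToyISD op) const {
    auto it = actions.find(op);
    return it == actions.end() ? ToyAction::Legal : it->second;
  }
};

// A node names its opcode and which result of that opcode it uses.
struct ToyNode {
  ToyISD opcode;
  unsigned resultIndex; // for SDIVREM: 0 = quotient, 1 = remainder
};

// An x86-like target: plain division and remainder must be expanded.
ToyTargetLowering makeX86LikeLowering() {
  ToyTargetLowering tli;
  tli.setOperationAction(ToyISD::SDIV, ToyAction::Expand);
  tli.setOperationAction(ToyISD::SREM, ToyAction::Expand);
  return tli;
}

// Target-independent legalizer step: consult the target, then rewrite.
ToyNode legalizeOp(const ToyNode &n, const ToyTargetLowering &tli) {
  if (tli.getOperationAction(n.opcode) != ToyAction::Expand)
    return n;
  assert(n.resultIndex == 0 && "SDIV and SREM produce a single result");
  switch (n.opcode) {
  case ToyISD::SDIV:
    return {ToyISD::SDIVREM, 0}; // keep the quotient
  case ToyISD::SREM:
    return {ToyISD::SDIVREM, 1}; // keep the remainder
  default:
    return n;
  }
}
```

Since both `x/y` and `x%y` end up as results of the same SDIVREM shape, a later common-subexpression pass can merge them, which is the point of the comment quoted above.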

Instruction selection – from SDNode to MachineSDNode

The next step in the code generation process [7] is instruction selection. LLVM provides a generic

table-based instruction selection mechanism that is auto-generated with the help of TableGen. Many

target backends, however, choose to write custom code in their SelectionDAGISel::Select

implementations to handle some instructions manually. Other instructions are then sent to the

auto-generated selector by calling SelectCode.

The X86 backend handles ISD::SDIVREM manually in order to take care of some special cases and

optimizations. The DAG node created at this step is a MachineSDNode, a subclass of SDNode which

holds the information required to construct an actual machine instruction, but still in DAG node

form. At this point the actual X86 instruction opcode is selected – X86::IDIV32r in our case.

Scheduling and emitting a MachineInstr

The code we have at this point is still represented as a DAG. But CPUs don't execute

DAGs, they execute a linear sequence of instructions. The goal of the scheduling step

is to linearize the DAG by assigning an order to its operations (nodes). The simplest

approach would be to just sort the DAG topologically, but LLVM's code generator

employs clever heuristics (such as register pressure reduction) to try and produce a

schedule that would result in faster code.
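
For reference, the "simplest approach" can be written down directly. The sketch below is a plain topological sort (Kahn's algorithm) over a DAG given as operand lists; it is not what LLVM's schedulers do, and `topologicalOrder` plus the node numbering are my own hypothetical choices.

```cpp
#include <cassert>
#include <cstddef>
#include <queue>
#include <vector>

// operands[i] lists the nodes whose results node i consumes.
// Returns the node indices in an order where every operand precedes its user.
std::vector<std::size_t>
topologicalOrder(const std::vector<std::vector<std::size_t>> &operands) {
  const std::size_t n = operands.size();
  std::vector<std::size_t> pending(n);            // unscheduled operands per node
  std::vector<std::vector<std::size_t>> users(n); // reverse edges

  for (std::size_t i = 0; i < n; ++i) {
    pending[i] = operands[i].size();
    for (std::size_t op : operands[i])
      users[op].push_back(i);
  }

  // Nodes without operands can be emitted right away.
  std::queue<std::size_t> ready;
  for (std::size_t i = 0; i < n; ++i)
    if (pending[i] == 0)
      ready.push(i);

  std::vector<std::size_t> order;
  while (!ready.empty()) {
    const std::size_t node = ready.front();
    ready.pop();
    order.push_back(node);
    // A user becomes ready once its last operand has been scheduled.
    for (std::size_t user : users[node])
      if (--pending[user] == 0)
        ready.push(user);
  }

  assert(order.size() == n && "the input graph must be acyclic");
  return order;
}
```

Numbering aa, bb and cc as nodes 0 to 2, the add as node 3 and the division as node 4, the operand lists are `{{}, {}, {}, {0, 1}, {3, 2}}`. Any valid order places the add before the division; a real scheduler chooses among the valid orders using its heuristics.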

Each target has some hooks it can implement to affect the way scheduling is done. I won't dwell

on this topic here, however.

Finally, the scheduler emits a list of instructions into a MachineBasicBlock, using

InstrEmitter::EmitMachineNode to translate from SDNode. The instructions here take the

MachineInstr form ("MI form" from now on), and the DAG can be destroyed.

We can examine the machine instructions emitted in this step by calling llc with the

-print-machineinstrs flag and looking at the first output that says "After

instruction selection":

    # After Instruction Selection:
    # Machine code for function foo: SSA
    Function Live Ins: %EDI in %vreg0, %ESI in %vreg1, %EDX in %vreg2
    Function Live Outs: %EAX

    BB#0: derived from LLVM BB %entry
        Live Ins: %EDI %ESI %EDX
            %vreg2<def> = COPY %EDX; GR32:%vreg2
            %vreg1<def> = COPY %ESI; GR32:%vreg1
            %vreg0<def> = COPY %EDI; GR32:%vreg0
            %vreg3<def,tied1> = ADD32rr %vreg0<tied0>, %vreg1, %EFLAGS<imp-def,dead>; GR32:%vreg3,%vreg0,%vreg1
            %EAX<def> = COPY %vreg3; GR32:%vreg3
            CDQ %EAX<imp-def>, %EDX<imp-def>, %EAX<imp-use>
            IDIV32r %vreg2, %EAX<imp-def>, %EDX<imp-def,dead>, %EFLAGS<imp-def,dead>, %EAX<imp-use>, %EDX<imp-use>; GR32:%vreg2
            %vreg4<def> = COPY %EAX; GR32:%vreg4
            %EAX<def> = COPY %vreg4; GR32:%vreg4
            RET

    # End machine code for function foo.

Note that the output mentions that the code is in SSA form, and we can see that some

registers being used are "virtual" registers (e.g. %vreg1).

Register allocation – from SSA to non-SSA machine instructions

Apart from some well-defined exceptions, the code generated from the instruction selector is in

SSA form. In particular, it assumes it has an infinite set of "virtual" registers to act

on. This, of course, isn't true. Therefore, the next step of the code generator is to invoke a

"register allocator", whose task is to replace virtual registers with physical ones from the

target's register bank.
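
To give a feel for what "replacing virtual by physical" involves, here is a deliberately naive sketch: each virtual register has a live interval, and a physical register is reused once the interval holding it has ended. Everything here (`ToyInterval`, `allocateRegisters`, the interval numbers) is hypothetical, there is no spilling, and LLVM's actual allocators are far more sophisticated.

```cpp
#include <algorithm>
#include <cassert>
#include <map>
#include <string>
#include <utility>
#include <vector>

// Hypothetical live range of one virtual register: live on [start, end).
struct ToyInterval {
  int vreg;
  int start;
  int end;
};

// Map each virtual register to a physical one, reusing a physical register
// once the interval that held it has ended. No spilling is attempted.
std::map<int, std::string> allocateRegisters(std::vector<ToyInterval> intervals,
                                             std::vector<std::string> freeRegs) {
  std::sort(intervals.begin(), intervals.end(),
            [](const ToyInterval &a, const ToyInterval &b) {
              return a.start < b.start;
            });

  std::vector<std::pair<int, std::string>> active; // (end, physical register)
  std::map<int, std::string> assignment;

  for (const ToyInterval &iv : intervals) {
    // Release registers whose intervals ended before this one starts.
    for (auto it = active.begin(); it != active.end();) {
      if (it->first <= iv.start) {
        freeRegs.push_back(it->second);
        it = active.erase(it);
      } else {
        ++it;
      }
    }
    assert(!freeRegs.empty() && "a real allocator would spill here");
    std::string reg = freeRegs.back();
    freeRegs.pop_back();
    assignment[iv.vreg] = reg;
    active.emplace_back(iv.end, reg);
  }
  return assignment;
}
```

With intervals `{0, 0, 3}`, `{1, 1, 3}` and `{3, 3, 4}` and a bank of just two registers, the first two virtual registers get distinct physical registers and the third reuses one of them, because the first two are dead by the time it becomes live.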

The exceptions mentioned above are also important and interesting, so let's talk

about them a bit more.

Some instructions in some architectures require fixed registers. A good example is our division

instruction in x86, which requires its inputs to be in the EDX and EAX registers. The instruction

selector knows about these restrictions, so as we can see in the code above, the inputs to IDIV32r

are physical, not virtual registers. This assignment is done by X86DAGToDAGISel::Select.
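
The fixed-register behaviour is easier to see when acted out. The sketch below models only EAX and EDX and mimics what CDQ and the 32-bit IDIV do with them; `ToyRegs`, `cdq`, `idiv32` and `fooOnToyRegs` are hypothetical helper names, and the asserts stand in for the divide error the hardware would raise.

```cpp
#include <cassert>
#include <cstdint>

// Hypothetical model of the two registers idiv cares about.
struct ToyRegs {
  std::int32_t eax;
  std::int32_t edx;
};

// CDQ: sign-extend EAX into the EDX:EAX pair.
void cdq(ToyRegs &r) { r.edx = (r.eax < 0) ? -1 : 0; }

// 32-bit IDIV: divide the 64-bit value in EDX:EAX by the operand,
// leaving the quotient in EAX and the remainder in EDX.
void idiv32(ToyRegs &r, std::int32_t divisor) {
  assert(divisor != 0 && "the hardware would raise a divide error");
  const std::uint64_t bits =
      (static_cast<std::uint64_t>(static_cast<std::uint32_t>(r.edx)) << 32) |
      static_cast<std::uint32_t>(r.eax);
  const std::int64_t dividend = static_cast<std::int64_t>(bits);
  assert(!(dividend == INT64_MIN && divisor == -1));
  const std::int64_t quotient = dividend / divisor;
  assert(quotient >= INT32_MIN && quotient <= INT32_MAX &&
         "quotient overflow also raises a divide error");
  r.eax = static_cast<std::int32_t>(quotient);
  r.edx = static_cast<std::int32_t>(dividend % divisor);
}

// The instruction sequence from the dump above, acted out on the toy registers.
std::int32_t fooOnToyRegs(std::int32_t aa, std::int32_t bb, std::int32_t cc) {
  ToyRegs r{aa + bb, 0}; // ADD32rr, then COPY into EAX
  cdq(r);                // CDQ
  idiv32(r, cc);         // IDIV32r; the divisor may live in any register
  return r.eax;          // COPY out of EAX, RET
}
```

Note that only the divisor is a free choice here; the dividend and both results are pinned to EAX and EDX, which is why the instruction selector cannot leave them as virtual registers.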

The register allocator takes care of all the non-fixed registers. There are a few more

optimization (and pseudo-instruction expansion) steps that happen on machine

instructions in SSA form, but I'm going to skip these. Similarly, I'm not going to

discuss the steps performed after register allocation, since these don't change the

basic form operations appear in (MachineInstr, at this point). If you're interested,

take a look at TargetPassConfig::addMachinePasses.

Emitting code

So we now have our original C function translated to MI form – a MachineFunction

filled with instruction objects (MachineInstr). This is the point at which the code

generator has finished its job and we can emit the code. In current LLVM, there are

two ways to do that. One is the (legacy) JIT which emits executable, ready-to-run code

directly into memory. The other is MC, which is an ambitious object-file-and-assembly

framework that's been part of LLVM for a couple of years, replacing the previous

assembly generator. MC is currently being used for assembly and object file emission

for all (or at least the important) LLVM targets. MC also enables "MCJIT",

which is a JIT-ting framework based on the MC layer. This is why I'm referring to

LLVM's JIT module as legacy.

I will first say a few words about the legacy JIT and then turn to MC, which is more

universally interesting.

The sequence of passes to JIT-emit code is defined by LLVMTargetMachine::addPassesToEmitMachineCode.

It calls addPassesToGenerateCode, which defines all the passes required to do what most of this

article has been talking about until now – turning IR into MI form. Next, it calls addCodeEmitter,

which is a target-specific pass for converting MIs into actual machine code. Since MIs are already

very low-level, it's fairly straightforward to translate them to runnable machine code [8]. The x86

code for that lives in lib/Target/X86/X86CodeEmitter.cpp. For our division instruction there's no

special handling here, because the MachineInstr it's packaged in already contains its opcode and

operands. It is handled generically with other instructions in emitInstruction.

MCInst

When LLVM is used as a static compiler (as part of clang, for instance), MIs are passed down to

the MC layer which handles the object-file emission (it can also emit textual assembly files).

Much can be said about MC, but that would require an article of its own. A good reference is

this post from the LLVM blog. I will keep focusing on the path a single instruction takes.

LLVMTargetMachine::addPassesToEmitFile is responsible for defining the sequence of actions

required to emit an object file. The actual MI-to-MCInst translation is done

in the EmitInstruction of the AsmPrinter interface. For x86, this method is

implemented by X86AsmPrinter::EmitInstruction, which delegates the work to the

X86MCInstLower class. Similarly to the JIT path, there is no special handling for our

division instruction at this point, and it's treated generically with other

instructions.

By passing -show-mc-inst to llc, we can see the MC-level instructions it creates,

alongside the actual assembly code:

    foo:                                    # @foo
    # BB#0:                                 # %entry
            movl    %edx, %ecx              # <MCInst #1483 MOV32rr
                                            #  <MCOperand Reg:46>
                                            #  <MCOperand Reg:48>>
            leal    (%rdi,%rsi), %eax       # <MCInst #1096 LEA64_32r
                                            #  <MCOperand Reg:43>
                                            #  <MCOperand Reg:110>
                                            #  <MCOperand Imm:1>
                                            #  <MCOperand Reg:114>
                                            #  <MCOperand Imm:0>
                                            #  <MCOperand Reg:0>>
            cltd                            # <MCInst #352 CDQ>
            idivl   %ecx                    # <MCInst #841 IDIV32r
                                            #  <MCOperand Reg:46>>
            ret                             # <MCInst #2227 RET>
    .Ltmp0:
            .size   foo, .Ltmp0-foo

The object file (or assembly code) emission is done by implementing the MCStreamer interface.

Object files are emitted by MCObjectStreamer, which is further subclassed according to the actual

object file format. For example, ELF emission is implemented in MCELFStreamer. The rough path an

MCInst travels through the streamers is MCObjectStreamer::EmitInstruction followed by a

format-specific EmitInstToData. The final emission of the instruction in binary form is, of

course, target-specific. It's handled by the MCCodeEmitter interface (for example

X86MCCodeEmitter). While code in the rest of LLVM is often tricky because it has to make a

separation between target-independent and target-specific capabilities, MC is even more

challenging because it adds another dimension – different object file formats. So some code is

completely generic, some code is format-dependent, and some code is target-dependent.
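
As an illustration of the target-specific end of this pipeline, here is a toy encoder for three of the instructions in the listing above. `ToyMCOpcode`, `ToyReg`, `ToyMCInst` and `encode` are hypothetical; in particular, the sketch stores registers by their x86 hardware numbers, whereas the `Reg:46` values in the llc output are LLVM-internal register numbers. The byte values follow the x86 encodings for CDQ (`99`), near RET (`C3`) and IDIV r/m32 (`F7 /7`).

```cpp
#include <cassert>
#include <cstdint>
#include <vector>

// Hypothetical, heavily reduced instruction representation:
// just an opcode and a list of register operands.
enum class ToyMCOpcode { CDQ, IDIV32r, RET };
enum ToyReg : std::uint8_t { EAX = 0, ECX = 1, EDX = 2, EBX = 3 };

struct ToyMCInst {
  ToyMCOpcode opcode;
  std::vector<ToyReg> operands;
};

// Turn one instruction into its machine-code bytes.
std::vector<std::uint8_t> encode(const ToyMCInst &inst) {
  switch (inst.opcode) {
  case ToyMCOpcode::CDQ:
    return {0x99};
  case ToyMCOpcode::RET:
    return {0xC3};
  case ToyMCOpcode::IDIV32r: {
    assert(inst.operands.size() == 1 && "idiv takes one explicit operand");
    // Opcode F7 with /7 in the ModRM reg field; mod = 11 selects a register
    // operand, and the low three bits name that register.
    const std::uint8_t modrm = 0xC0 | (7 << 3) | inst.operands[0];
    return {0xF7, modrm};
  }
  }
  return {}; // unreachable for valid opcodes
}
```

Encoding `idivl %ecx` this way gives the two bytes `F7 F9`. Notice that the implicit EAX and EDX operands do not show up in the encoding at all; they are part of what the opcode means.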

Assemblers and disassemblers

An MCInst is deliberately a very simple representation. It tries to shed as much semantic information

as possible, keeping only the instruction opcode and list of operands (and a source location for

assembler diagnostics). Like LLVM IR, it's an internal representation with multiple possible

encodings. The two most obvious are assembly (as shown above) and binary object files.

llvm-mc is a tool that uses the MC framework to implement assemblers and disassemblers.

Internally, MCInst is the representation used to translate between the binary and

textual forms. At this point the tool doesn't care which compiler produced the

assembly / object file.

[1] To examine the AST created by Clang, compile a source file with the -cc1 -ast-dump options.

[2] I ran this IR through opt -mem2reg | llvm-dis in order to clean up the spills.

[3] These things are a bit hard to grep for because of some C preprocessor hackery employed

by LLVM to minimize code duplication. Take a look at the include/llvm/Instruction.def file

and its usage in various places in LLVM's source for more insight.

[4] A DAG here means Directed Acyclic Graph, which is a data structure the LLVM code generator

uses to represent the various operations with the values they produce and consume.

[5] Which is arguably the single scariest piece of code in LLVM.

[6] This is an example of how target-specific information is abstracted to guide the

target-independent code generation algorithm.

[7] The code generator performs DAG optimizations between its major steps, such as between

legalization and selection. These optimizations are important and interesting to know

about, but since they act on and return selection DAG nodes, they're out of the focus of

this article.

[8] When I say "machine code" at this point, I mean actual bytes in a buffer,

representing encoded instructions the CPU can run. The JIT directs the CPU to execute code

from this buffer once emission is over.

This entry was posted on Saturday, November 24th, 2012 at 15:37 and is filed under Compilation.